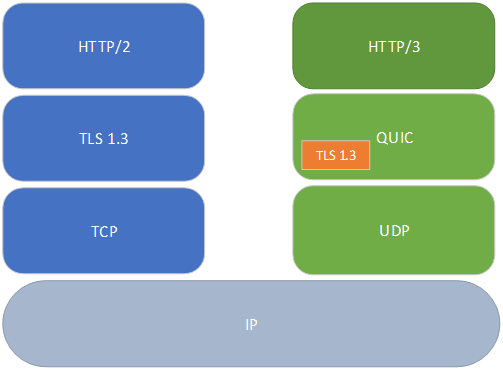

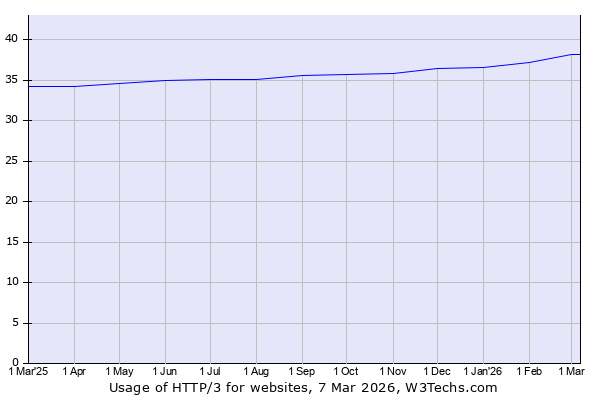

In 2021, the IETF published RFC 9000, formally standardizing QUIC—a transport protocol that fundamentally rethinks how data moves across the internet. By May 2024, over 12 million IPv4 addresses were responding to QUIC handshakes, and HTTP/3 now powers roughly 36% of all websites. This wasn’t an incremental improvement. QUIC abandoned TCP entirely, building a new transport on UDP to solve problems that had accumulated over four decades of internet evolution.

The Head-of-Line Blocking Problem That Couldn’t Be Fixed

TCP was designed in the 1970s as a reliable, ordered byte stream. Every byte transmitted has a sequence number, and the receiver hands data to the application strictly in sequence order. This design creates a fundamental vulnerability: when a single packet is lost, all subsequent data, regardless of which application stream it belongs to, waits in the receive buffer for the retransmission.

HTTP/2 attempted to work around this by multiplexing multiple requests over a single TCP connection. Instead of opening six parallel connections like HTTP/1.1, browsers could send dozens of requests through one pipe. The theory was sound: eliminate connection overhead, improve congestion control, and reduce latency. In practice, HTTP/2 made the head-of-line blocking problem worse.

When packet loss occurs on an HTTP/2 connection, every multiplexed stream stalls. A lost packet containing image data blocks the delivery of CSS, JavaScript, and HTML that happened to be transmitted afterward. The more streams multiplexed, the more collateral damage from each loss event. Research from Salesforce Engineering demonstrated that HTTP/2’s multiplexing actually increases the probability and impact of head-of-line blocking because more data depends on a single connection’s integrity.

Image source: Salesforce Engineering

QUIC’s solution was architectural: eliminate TCP’s monolithic sequence number space entirely. Each QUIC stream maintains its own delivery state. When packet loss affects stream A, streams B, C, and D continue delivering data independently. The lost packet only impacts the stream whose data it carried.
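The difference can be shown with a toy model. The sketch below is illustrative only: the packet layout, the stream names, and both function names are assumptions made for this example, not part of any QUIC library. Six packets carry chunks for three streams, and packet 1 is lost.

```python
from collections import defaultdict

# Each packet carries one chunk for one stream: (packet_no, stream_id, stream_offset).
packets = [
    (0, "A", 0), (1, "B", 0), (2, "C", 0),
    (3, "A", 1), (4, "B", 1), (5, "C", 1),
]
lost = {1}  # packet 1 (stream B, offset 0) is dropped in transit


def deliverable_single_ordered_stream(packets, lost):
    """TCP-like: one sequence space, so delivery stops at the first gap."""
    out = []
    for pkt_no, stream, offset in packets:
        if pkt_no in lost:
            break
        out.append((stream, offset))
    return out


def deliverable_per_stream(packets, lost):
    """QUIC-like: each stream is ordered on its own, so a gap stalls only that stream."""
    received = defaultdict(set)
    for pkt_no, stream, offset in packets:
        if pkt_no not in lost:
            received[stream].add(offset)
    out = []
    for stream in sorted(received):
        offset = 0
        while offset in received[stream]:  # deliver the in-order prefix of this stream
            out.append((stream, offset))
            offset += 1
    return out


print(deliverable_single_ordered_stream(packets, lost))
# [('A', 0)]
print(deliverable_per_stream(packets, lost))
# [('A', 0), ('A', 1), ('C', 0), ('C', 1)]
```

In the single-sequence model only the chunk ahead of the gap reaches the application. With per-stream ordering, streams A and C finish while stream B alone waits for the retransmission.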

Merging the Handshake: From 3 RTTs to 1

Before QUIC, establishing a secure HTTPS connection required two separate handshakes. TCP’s three-way handshake consumed one round-trip time (RTT). TLS then added one more RTT (TLS 1.3) or two (TLS 1.2) for key exchange and certificate verification, for a total of two to three RTTs. A client in New York connecting to a server in Singapore, with roughly 200ms RTT, would spend 400-600ms just establishing the connection before sending a single byte of application data.

QUIC merged these handshakes into a single exchange. The initial QUIC packet contains both transport parameters and the TLS ClientHello. The server responds with its TLS ServerHello, certificate chain, and finished message—all in packets that also serve as transport-layer acknowledgments. The result: a complete, encrypted connection in exactly one RTT.

Image source: APNIC Blog

Cloudflare’s measurements showed HTTP/3 achieving 176ms time-to-first-byte versus 201ms for HTTP/2—a 12.4% improvement. For subsequent connections, QUIC’s 0-RTT feature allows clients to send encrypted application data immediately using pre-shared keys from previous sessions, eliminating the handshake entirely for known servers.
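The handshake arithmetic is simple enough to write down. This sketch assumes the 200ms round trip from the New York to Singapore example and counts only the round trips spent before application data can flow; the table keys and the function name are made up for illustration.

```python
RTT_MS = 200  # the New York to Singapore round trip used in the text

# Round trips spent before the first byte of application data can be sent.
SETUP_RTTS = {
    "TCP + TLS 1.2": 1 + 2,
    "TCP + TLS 1.3": 1 + 1,
    "QUIC (first connection)": 1,
    "QUIC (0-RTT resumption)": 0,
}


def setup_delay_ms(rtts: int, rtt_ms: float = RTT_MS) -> float:
    """Connection setup delay for a given number of handshake round trips."""
    return rtts * rtt_ms


for name, rtts in SETUP_RTTS.items():
    print(f"{name:<26} {rtts} RTT  {setup_delay_ms(rtts):>4.0f} ms")
```

The model ignores server processing time and packet loss during the handshake, so real measurements such as the Cloudflare figures show smaller gaps than the raw RTT count suggests.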

This 0-RTT capability comes with security trade-offs. Because the server hasn’t contributed fresh entropy to the session, early data is vulnerable to replay attacks: an attacker who captures the client’s first flight can send it again, and the server may process the request twice. The QUIC specification mandates that applications explicitly opt in to accepting 0-RTT data and implement replay detection. Financial transactions, authentication requests, and other non-idempotent operations should never use 0-RTT.
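One common way to apply that rule at the HTTP layer is to process early data only for requests that are safe to repeat and to answer everything else with status 425 (Too Early, defined in RFC 8470) so the client retries after the handshake. The sketch below is a minimal policy under that assumption; the `Request` type, the `SAFE_METHODS` set, and both function names are hypothetical, and a real deployment would also need to confirm that its GET handlers have no side effects.

```python
from dataclasses import dataclass

# Assumed policy: only methods that HTTP defines as safe may run from early data.
SAFE_METHODS = frozenset({"GET", "HEAD", "OPTIONS"})


@dataclass(frozen=True)
class Request:
    method: str
    path: str
    early_data: bool  # True if the request arrived as 0-RTT data


def accepts_early_data(req: Request) -> bool:
    """True if this request may be processed before the handshake completes."""
    return req.method.upper() in SAFE_METHODS


def handle(req: Request) -> int:
    """Return 425 for replay-sensitive early data, 200 as a stand-in for normal handling."""
    if req.early_data and not accepts_early_data(req):
        return 425  # Too Early: the client should retry once the handshake is done
    return 200


print(handle(Request("GET", "/index.html", early_data=True)))   # 200
print(handle(Request("POST", "/transfer", early_data=True)))    # 425
print(handle(Request("POST", "/transfer", early_data=False)))   # 200
```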

Connection Migration: Breaking the 5-Tuple Dependency

TCP identifies connections by a 5-tuple: source IP, source port, destination IP, destination port, and protocol. Any change to these values—common when mobile devices switch between Wi-Fi and cellular networks—terminates the connection. The application must re-establish state, repeat authentication handshakes, and restart in-flight data transfers.

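QUIC introduced connection IDs: opaque identifiers exchanged during the handshake that identify the connection regardless of network path changes. When a mobile client transitions from home Wi-Fi to cellular data, it keeps sending packets on the same connection from its new IP address, and the server finds the existing session by connection ID instead of by address. The server validates the new path through a challenge-response mechanism (PATH_CHALLENGE and PATH_RESPONSE frames) and resumes the session. (RFC 9000 expects a client that migrates deliberately to switch to a spare connection ID the server issued earlier, so that observers cannot link the two paths; the diagram below shows a single ID for simplicity.)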

```mermaid
sequenceDiagram
    participant Client
    participant Server
    Note over Client,Server: Initial Connection (WiFi)
    Client->>Server: QUIC Initial (Connection ID: ABC123)
    Server->>Client: QUIC Handshake Response
    Note over Client,Server: Network Switch to Cellular
    Client->>Server: QUIC Packet (Connection ID: ABC123, New IP)
    Server->>Client: PATH_CHALLENGE (validate new path)
    Client->>Server: PATH_RESPONSE
    Note over Client,Server: Connection Resumed Seamlessly
```
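The exchange in the diagram can be sketched as a server-side session table keyed by connection ID. This is a simplified model under stated assumptions: the `Server` class and its methods are invented for this example, packets are reduced to a connection ID plus a source address, and only the 8-byte challenge payload reflects the actual frame format.

```python
import os


class Server:
    def __init__(self):
        self.sessions = {}            # connection ID -> session state
        self.pending_challenges = {}  # connection ID -> (candidate address, challenge bytes)

    def accept(self, cid: bytes, addr: tuple) -> None:
        self.sessions[cid] = {"addr": addr, "bytes_delivered": 0}

    def on_packet(self, cid: bytes, addr: tuple):
        session = self.sessions[cid]  # lookup by connection ID, not by address
        if addr != session["addr"]:
            challenge = os.urandom(8)  # PATH_CHALLENGE carries 8 unpredictable bytes
            self.pending_challenges[cid] = (addr, challenge)
            return ("PATH_CHALLENGE", challenge)
        return ("OK", None)

    def on_path_response(self, cid: bytes, data: bytes) -> bool:
        pending = self.pending_challenges.get(cid)
        if pending is None or pending[1] != data:
            return False  # unknown or mismatched response: keep using the old path
        self.sessions[cid]["addr"] = pending[0]  # path validated, session state kept
        del self.pending_challenges[cid]
        return True


server = Server()
wifi, cellular = ("192.0.2.10", 50000), ("198.51.100.7", 41000)
server.accept(b"ABC123", wifi)
server.sessions[b"ABC123"]["bytes_delivered"] = 4096

kind, challenge = server.on_packet(b"ABC123", cellular)  # same ID, new address
validated = server.on_path_response(b"ABC123", challenge)  # client echoes the bytes
print(kind, validated, server.sessions[b"ABC123"])
```

The session's `bytes_delivered` counter survives the address change, which is the point of the mechanism: nothing above the transport has to be re-established.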

A 2024 Internet-wide scan by researchers revealed that connection migration support remains uneven. Among IPv6 targets with proper SNI (Server Name Indication), approximately 78% supported migration. For IPv4, the figure dropped to 52%. Major providers like Cloudflare and Google have yet to enable migration universally, often due to load balancer architecture that routes packets by 5-tuple rather than connection ID.
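The load balancer issue is easy to see in a sketch. The example below assumes a hash-based balancer with four backends; the backend names, the hashing scheme, and both routing functions are illustrative and do not describe any specific product.

```python
import hashlib

BACKENDS = ["backend-0", "backend-1", "backend-2", "backend-3"]


def _pick(key: bytes) -> str:
    """Map a routing key to a backend with a stable hash."""
    digest = hashlib.sha256(key).digest()
    return BACKENDS[int.from_bytes(digest[:4], "big") % len(BACKENDS)]


def route_by_five_tuple(src_ip, src_port, dst_ip, dst_port, proto="udp") -> str:
    return _pick(f"{src_ip}:{src_port}>{dst_ip}:{dst_port}/{proto}".encode())


def route_by_connection_id(cid: bytes) -> str:
    return _pick(cid)


cid = b"ABC123"
before = ("192.0.2.10", 50000, "203.0.113.1", 443)    # client on Wi-Fi
after = ("198.51.100.7", 41000, "203.0.113.1", 443)   # same client on cellular

print(route_by_five_tuple(*before), route_by_five_tuple(*after))  # may differ
print(route_by_connection_id(cid), route_by_connection_id(cid))   # always identical
```

With 5-tuple routing the migrated packets can land on a backend that holds no state for the connection, so the session is lost even though the protocol allowed the move. Routing on the connection ID keeps the client pinned to the backend that owns its session.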

Encrypted by Default: Hiding Transport Metadata

Every TCP header traversing the internet exposes sequence numbers, acknowledgment numbers, and window sizes in plaintext. Network operators, ISPs, and middleboxes have long used this visibility for traffic shaping, quality of service enforcement, and surveillance. QUIC encrypts virtually all transport metadata—including packet numbers and most frame types—leaving only the connection ID and minimal framing visible.
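To make "minimal framing" concrete, the sketch below parses the fields of a long-header packet that the QUIC invariants (RFC 8999) keep readable across versions: the header form bit, the version, and the two connection IDs. The function name and the sample bytes are made up for illustration, and the parser skips bounds checks, so a truncated packet would raise `IndexError`.

```python
def parse_long_header(packet: bytes) -> dict:
    """Parse the version-independent fields of a QUIC long header (RFC 8999)."""
    if not packet or not (packet[0] & 0x80):  # high bit set means long header
        raise ValueError("not a long-header packet")
    version = int.from_bytes(packet[1:5], "big")
    pos = 5
    dcid_len = packet[pos]
    pos += 1
    dcid = packet[pos:pos + dcid_len]
    pos += dcid_len
    scid_len = packet[pos]
    pos += 1
    scid = packet[pos:pos + scid_len]
    pos += scid_len
    return {
        "version": version,
        "dcid": dcid.hex(),
        "scid": scid.hex(),
        "opaque_bytes": len(packet) - pos,  # everything else is version-specific
    }


# Hypothetical packet: long-header byte, version 1, 8-byte DCID, empty SCID, 20 payload bytes.
sample = (
    bytes([0xC3])
    + (1).to_bytes(4, "big")
    + bytes([8]) + bytes.fromhex("8394c8f03e515708")
    + bytes([0])
    + bytes(20)
)
print(parse_long_header(sample))
# {'version': 1, 'dcid': '8394c8f03e515708', 'scid': '', 'opaque_bytes': 20}
```

An on-path observer can recover this much without keys. Packet numbers, ACKs, stream offsets, and flow-control signals all sit in the part counted here as `opaque_bytes`.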

This design prevents protocol ossification. TCP’s evolution stalled because middleboxes made assumptions about header formats; when TCP attempted to add options like selective acknowledgments (SACK) or window scaling, deployed hardware would drop or corrupt non-conformant packets. TLS 1.3 faced similar deployment challenges and shipped only after adopting a middlebox compatibility mode that makes its handshake resemble TLS 1.2; “grease” values, which advertise random reserved code points, serve the related purpose of keeping extension points from ossifying. QUIC’s encryption prevents middleboxes from parsing and interfering with transport mechanics.

The trade-off is operational visibility. Network engineers can no longer diagnose packet loss, reordering, or congestion by inspecting traffic. Wireshark can decode QUIC packets, but only with access to session keys—a requirement that defeats passive monitoring. This has led some enterprise security vendors to recommend blocking QUIC entirely, forcing fallback to TCP/TLS where traffic can be inspected.

Userspace Congestion Control: Flexibility vs Performance

QUIC implementations run in userspace, not the kernel. This was a deliberate design choice: applications can update congestion control algorithms, implement custom loss recovery, and experiment with new features without waiting for operating system patches. A browser can ship BBR congestion control to millions of users overnight; the same change in TCP would require kernel updates across Windows, Linux, macOS, Android, and iOS.
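Because the controller is ordinary application code, swapping algorithms can be as small as passing a different object to the connection. The sketch below shows that shape with a deliberately simplified Reno-style controller loosely modeled on the one described in RFC 9002. The class names, the 1200-byte datagram size, and the `Connection` wrapper are assumptions for this example, and real controllers also handle pacing, recovery periods, and persistent congestion.

```python
from abc import ABC, abstractmethod

MAX_DATAGRAM_SIZE = 1200  # bytes; assumed value for this sketch


class CongestionController(ABC):
    cwnd: int

    @abstractmethod
    def on_ack(self, acked_bytes: int) -> None: ...

    @abstractmethod
    def on_loss(self) -> None: ...


class RenoLike(CongestionController):
    def __init__(self):
        self.cwnd = 10 * MAX_DATAGRAM_SIZE  # initial window
        self.ssthresh = float("inf")

    def on_ack(self, acked_bytes: int) -> None:
        if self.cwnd < self.ssthresh:
            self.cwnd += acked_bytes  # slow start: grow by the bytes acknowledged
        else:
            # congestion avoidance: roughly one datagram per window's worth of ACKs
            self.cwnd += MAX_DATAGRAM_SIZE * acked_bytes // self.cwnd

    def on_loss(self) -> None:
        self.cwnd = max(self.cwnd // 2, 2 * MAX_DATAGRAM_SIZE)  # halve, with a floor
        self.ssthresh = self.cwnd


class Connection:
    def __init__(self, controller: CongestionController):
        self.cc = controller  # a BBR-style class could be dropped in here

    def can_send(self, bytes_in_flight: int) -> bool:
        return bytes_in_flight < self.cc.cwnd


conn = Connection(RenoLike())
conn.cc.on_ack(1200)
conn.cc.on_loss()
print(conn.cc.cwnd, conn.can_send(6000), conn.can_send(7000))
# 6600 True False
```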

The performance penalty is real. Kernel TCP benefits from decades of optimization: zero-copy socket APIs, TCP segmentation offload (TSO), and hardware-accelerated checksums. QUIC implementations must encrypt and decrypt every packet in userspace, copy data between application and kernel buffers, and implement congestion control in application code. Benchmarks from 2024 showed QUIC implementations sometimes 50-100x slower than kernel TCP in ideal network conditions.

Image source: Michelin Blog

However, in challenging network conditions—high latency, packet loss, or jitter—QUIC’s architectural advantages dominate. Studies consistently show QUIC outperforming TCP when packet loss exceeds 1%. At 5% packet loss, QUIC can deliver twice the throughput of TCP. For mobile users on unreliable networks, the userspace overhead becomes negligible compared to the gains from independent stream recovery and faster handshakes.

Acknowledgment Ranges: 256 vs 3

TCP’s Selective Acknowledgments (SACK) option can encode at most four ranges of received data, and only three when the timestamp option shares the header, as it usually does. When packet loss is sparse, this suffices. In highly lossy environments such as satellite links, congested mobile networks, or intercontinental paths, three ranges cannot capture the receiver’s actual state. The sender must wait for cumulative acknowledgments or retransmit data that was already received.

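QUIC ACK frames can carry far more: the figure commonly cited is up to 256 acknowledgment ranges in a single frame, and RFC 9000 encodes the range count as a variable-length integer, so the practical ceiling is what fits in one packet. Each range specifies a contiguous block of received packet numbers. This granularity allows senders to precisely identify lost packets and retransmit only what’s necessary, even when dozens of packets have been lost across a large window.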
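A short sketch shows what the extra ranges buy. It collapses a set of received packet numbers into contiguous ranges, newest first, and then compares the full list with a view truncated to three ranges. The function name and the sample data are invented for this example, and applying packet numbers to the three-block limit is only an analogy, since TCP SACK blocks describe byte ranges.

```python
def ack_ranges(received: set[int]) -> list[tuple[int, int]]:
    """Collapse received packet numbers into (smallest, largest) ranges, newest first."""
    ranges: list[tuple[int, int]] = []
    for pn in sorted(received, reverse=True):
        if ranges and ranges[-1][0] == pn + 1:
            ranges[-1] = (pn, ranges[-1][1])  # extend the current range downward
        else:
            ranges.append((pn, pn))  # a gap was found: start a new range
    return ranges


received = {0, 1, 2, 4, 5, 7, 9, 10, 12, 14}
all_ranges = ack_ranges(received)
three_only = all_ranges[:3]  # what a receiver limited to three ranges could report

print(all_ranges)   # [(14, 14), (12, 12), (9, 10), (7, 7), (4, 5), (0, 2)]
print(three_only)   # [(14, 14), (12, 12), (9, 10)]
```

With all six ranges the sender knows that only packets 3, 6, 8, 11, and 13 are missing. With three, it cannot tell whether 4, 5, and 7 arrived and may resend them needlessly.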

The packet number space itself differs fundamentally from TCP. QUIC uses monotonically increasing 62-bit packet numbers that never wrap. Retransmitted data goes into new packets with new numbers, so the protocol never reuses a packet number. This removes TCP’s retransmission ambiguity, where an ACK cannot say whether it acknowledges the original segment or its retransmission, and it sidesteps the wraparound of TCP’s 32-bit sequence numbers after 4GB of data transfer.
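The effect on RTT measurement can be sketched in a few lines. The `Sender` class below is a hypothetical illustration, not an API from any QUIC stack: it assigns a fresh packet number to every transmission, so an acknowledgment always maps to exactly one send time.

```python
import itertools


class Sender:
    def __init__(self):
        self._next_pn = itertools.count()  # monotonically increasing, never reused
        self.sent = {}                     # packet number -> (payload, send time)

    def send(self, data: bytes, now: float) -> int:
        pn = next(self._next_pn)
        self.sent[pn] = (data, now)
        return pn

    def on_ack(self, pn: int, now: float) -> float:
        """Return the RTT sample; the packet number names exactly one transmission."""
        _, sent_at = self.sent.pop(pn)
        return now - sent_at


sender = Sender()
first = sender.send(b"hello", now=0.00)   # original transmission, packet 0
retry = sender.send(b"hello", now=0.30)   # same data retransmitted as packet 1
rtt = sender.on_ack(retry, now=0.35)      # the ACK names packet 1, so the sample is ~50 ms
print(first, retry, round(rtt, 3))
```

Under TCP the same bytes would reuse the same sequence number, and without extra information such as timestamps the sender could not tell whether the sample should be 350 ms or 50 ms.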

Deployment Reality: Not All QUIC is Equal

The HTTP/3 adoption statistics—36% of websites—mask significant variation in implementation quality. A server supporting HTTP/3 might lack connection migration, use suboptimal congestion control, or suffer from inefficient packet pacing. Chrome’s QUIC implementation uses CUBIC by default; some CDNs have switched to BBR. The choice of congestion control algorithm can swing performance by 20-40% depending on network conditions.

Image source: W3Techs

Server implementations also vary widely in their handling of 0-RTT data. Some accept all early data; others reject it by default due to replay concerns. Some implementations validate paths aggressively during migration; others silently drop migrated connections. This heterogeneity makes performance comparisons difficult—QUIC on Cloudflare behaves differently from QUIC on Google, which behaves differently from QUIC on a self-hosted nginx with quiche.

The Middlebox Problem

QUIC runs over UDP port 443, a choice that bypasses most firewall restrictions. However, some enterprise networks block UDP entirely, forcing HTTP/3 to fall back to HTTP/2 over TCP. More insidiously, middleboxes may throttle UDP traffic to lower priority, assuming it’s low-value protocols like DNS or gaming traffic rather than critical web data.

NAT devices present another challenge. TCP connections maintain state through explicit SYN/FIN handshakes; NATs can track connection lifetime precisely. UDP has no such signaling. NATs typically use idle timeouts (30-120 seconds) to garbage-collect UDP bindings. When a NAT rebinds—assigning a new external port mid-session—QUIC’s connection ID mechanism allows the session to continue, but the server must validate the new path through the challenge mechanism, adding latency.
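One way an endpoint can avoid idle-timeout rebinding is to send a small keepalive, such as a QUIC PING frame, before the binding expires. The sketch below assumes the 30-second lower bound from the range above and a safety factor of one half; both function names and the factor are illustrative choices, not values taken from a specification.

```python
def keepalive_interval(nat_timeout_s: float, safety_factor: float = 0.5) -> float:
    """Pick a keepalive period comfortably inside the assumed NAT idle timeout."""
    return nat_timeout_s * safety_factor


def should_send_ping(last_activity_s: float, now_s: float, nat_timeout_s: float = 30.0) -> bool:
    """True once the connection has been idle for a full keepalive interval."""
    return now_s - last_activity_s >= keepalive_interval(nat_timeout_s)


print(keepalive_interval(30.0))                            # 15.0
print(should_send_ping(last_activity_s=0.0, now_s=10.0))   # False
print(should_send_ping(last_activity_s=0.0, now_s=15.0))   # True
```

Keepalives cost battery and bandwidth on mobile devices, so the alternative is to let the binding lapse and rely on path validation when traffic resumes.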

What QUIC Means for the Future

QUIC’s design philosophy—encrypt everything, run in userspace, enable migration—represents a shift toward application-controlled networking. The transport layer is no longer a kernel black box; it’s application code that can be versioned, rolled back, and A/B tested. Future protocols will likely extend this pattern: application-layer congestion control, custom retransmission strategies, and protocol extensions deployed independently of operating systems.

The standardization process itself has evolved. QUIC went from Google’s internal experiment (2012) to an IETF working group (chartered in 2016, after Google brought a draft to the IETF in 2015) to RFC (2021) in under a decade, which is remarkably fast for internet standards. The open development model, with multiple independent implementations testing interoperability at each draft, produced a more robust specification than the closed TCP standardization of the 1980s.

For developers building networked applications, QUIC offers capabilities impossible with TCP: seamless mobile handoffs, encrypted transport metadata, and fine-grained stream prioritization. The learning curve is steeper—QUIC exposes more knobs and requires more application-level decisions—but the performance ceiling is higher. As browser support approaches universality and server implementations mature, the question shifts from “should we use QUIC?” to “how do we optimize for QUIC?”

References

- Iyengar, J., & Thomson, M. (2021). RFC 9000: QUIC: A UDP-Based Multiplexed and Secure Transport. IETF. https://datatracker.ietf.org/doc/html/rfc9000
- Bishop, M. (2022). RFC 9114: HTTP/3. IETF. https://www.ietf.org/rfc/rfc9114.html
- Cloudflare. (2020). Comparing HTTP/3 vs. HTTP/2 Performance. Cloudflare Blog. https://blog.cloudflare.com/http-3-vs-http-2/
- Huston, G. (2022). Comparing TCP and QUIC. APNIC Blog. https://blog.apnic.net/2022/11/03/comparing-tcp-and-quic/
- Salesforce Engineering. (2022). The Full Picture on HTTP/2 and HOL Blocking. https://engineering.salesforce.com/the-full-picture-on-http-2-and-hol-blocking-7f964b34d205/
- Zirngibl, J., et al. (2024). An Analysis of QUIC Connection Migration in the Wild. arXiv. https://arxiv.org/html/2410.06066v1
- W3Techs. (2024). Usage Statistics of HTTP/3 for Websites. https://w3techs.com/technologies/details/ce-http3
- Cloudflare. (2018). The Road to QUIC. Cloudflare Blog. https://blog.cloudflare.com/the-road-to-quic/
- Fischlin, M., & Günther, F. (2017). Replay Attacks on Zero Round-Trip Time. IEEE EuroS&P. https://eprint.iacr.org/2017/082.pdf
- IETF. (2021). RFC 9001: Using TLS to Secure QUIC. https://datatracker.ietf.org/doc/html/rfc9001