A financial services company migrated their payment processing to a message queue architecture. The design seemed straightforward: producers publish payment requests, workers consume and process them. Six months later, they discovered their customers had been double-charged for approximately 3% of transactions. The queue was working exactly as configured—the problem was that “working” meant something different than they expected.

Message queues occupy a strange position in distributed systems. They appear deceptively simple on the surface: put message in, get message out. But beneath that simplicity lies a maze of trade-offs involving durability, ordering, delivery guarantees, and failure modes. Understanding these trade-offs isn’t academic—it’s the difference between a reliable system and one that silently corrupts data.

Two Fundamentally Different Philosophies

Not all message queues are built the same way. The architectural divide between log-based systems (Kafka) and exchange-based systems (RabbitMQ) reflects fundamentally different assumptions about what messaging means.

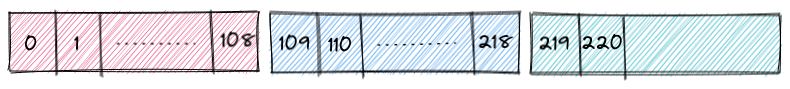

Kafka treats messages as an immutable log. When a producer sends a message, Kafka appends it to a partition—a sequential, append-only file. Messages aren’t removed when consumed; they persist until retention policies delete them. Multiple consumers can read the same message at different times, at different offsets, for different purposes. This design enables event sourcing, log compaction, and replay scenarios that are impossible with traditional queues.

RabbitMQ takes a more traditional approach. Messages flow through exchanges—routing entities that distribute copies to bound queues based on routing rules. When a consumer acknowledges a message, RabbitMQ removes it from the queue. The message ceases to exist in the broker. This model excels at work distribution and point-to-point communication but makes replay impossible without external mechanisms.

Image source: RabbitMQ - AMQP 0-9-1 Model Explained

The practical implications are significant. In Kafka, a message’s position (its topic, partition, and offset) identifies it uniquely. In RabbitMQ, messages are transient: you cannot reference a message after it’s been consumed. This affects everything from debugging to audit trails to exactly-once processing strategies.
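A deliberately simplified in-memory model makes the contrast concrete. The classes below are hypothetical stand-ins, not either broker’s client API. The Kafka-style partition is a list addressed by offset, and reading never shrinks it. The classic RabbitMQ-style queue forgets a message once it is acknowledged.

```python
from collections import deque


class PartitionLog:
    """Toy Kafka-style partition: append-only, addressed by offset (hypothetical)."""

    def __init__(self):
        self.records = []

    def append(self, value):
        self.records.append(value)
        return len(self.records) - 1  # offset of the new record

    def read(self, offset, max_records=10):
        # Reading never removes anything; each consumer tracks its own offset.
        return self.records[offset:offset + max_records]


class ClassicQueue:
    """Toy RabbitMQ-style queue: an acknowledged message is gone (hypothetical)."""

    def __init__(self):
        self.ready = deque()

    def publish(self, value):
        self.ready.append(value)

    def consume_and_ack(self):
        # After the ack, the broker keeps no copy of the message.
        return self.ready.popleft() if self.ready else None


log = PartitionLog()
for event in ["created", "paid", "shipped"]:
    log.append(event)

# Two independent readers of the same log, each with its own offset.
billing_offset = 0
billing_batch = log.read(billing_offset)
billing_offset += len(billing_batch)
audit_batch = log.read(0)  # the audit reader still sees everything

queue = ClassicQueue()
for event in ["created", "paid", "shipped"]:
    queue.publish(event)
first = queue.consume_and_ack()  # "created" can never be read again
```

Replay in the log model is just `log.read(0)` again. The queue model has no equivalent, because nothing remains to point at.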

Durability: What “Persistent” Actually Means

Both systems offer durability, but the guarantees differ in subtle but critical ways.

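Kafka’s durability comes from its replicated, append-only log. Each partition is a sequence of segment files stored on disk. When a producer sends a message with acks=all, the partition leader waits until every in-sync replica has written the message to its copy of the log before responding to the producer. The topic setting min.insync.replicas determines how small the in-sync set may shrink before such writes are rejected. Kafka does not fsync each write by default. The data sits in the operating system’s page cache on several brokers, so durability comes from replication rather than from an immediate flush to disk.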

The segment structure matters for performance and retention. Kafka doesn’t write individual messages to individual files. Instead, it appends messages to an active segment file until that segment reaches a size threshold (default 1 GB) or time threshold (default 7 days). Then it rolls to a new segment.

Image source: Strimzi - Deep Dive into Kafka Storage Internals

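This design has a counterintuitive implication: retention time is not precise. Kafka deletes whole segments, not individual messages. A segment becomes eligible for deletion only once its newest message is older than the retention period. If you configure 10-minute retention, a message might survive for 25 minutes or more. Several delays add up: the segment must close before deletion, the retention check runs periodically (every 5 minutes by default, via log.retention.check.interval.ms), and files are removed only after a further delay (file.delete.delay.ms, 1 minute by default). The retention configuration provides a lower bound, not an upper bound.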
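The arithmetic behind the “25 minutes or more” figure can be sketched with a simplified worst-case model. The model is an assumption, not Kafka’s exact deletion algorithm. Assume segment.ms is set to 10 minutes (its default is 7 days) and writes trickle in steadily, so the segment stays open until it rolls on time. The two remaining parameters default to the broker values named above.

```python
MINUTE_MS = 60_000


def worst_case_lifetime_ms(retention_ms, segment_ms,
                           check_interval_ms=300_000,     # log.retention.check.interval.ms default
                           file_delete_delay_ms=60_000):  # file.delete.delay.ms default
    """Rough upper bound on how long a record can outlive its write time.

    Simplified model (an assumption): the record is the first one in a fresh
    segment, the segment stays open for the full segment_ms, and its newest
    record lands just before the roll.
    """
    # The segment's newest record is about segment_ms younger than ours, and
    # deletion waits until that newest record is older than retention_ms.
    eligible_after = segment_ms + retention_ms
    # The retention check may have just run, so allow one full interval,
    # then the asynchronous delay before the file is actually removed.
    return eligible_after + check_interval_ms + file_delete_delay_ms


bound = worst_case_lifetime_ms(retention_ms=10 * MINUTE_MS, segment_ms=10 * MINUTE_MS)
print(bound / MINUTE_MS)  # 26.0
```

Lowering segment.ms narrows the gap. The cost is more, smaller segment files for the broker to manage.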

RabbitMQ’s durability operates differently. With publisher confirms enabled, the broker confirms a persistent message routed to a durable queue only after writing it to disk. But here’s the nuance: with classic queues, durability requires both a durable queue and a persistent message. A persistent message sent to a transient queue won’t survive a broker restart. A transient message sent to a durable queue won’t survive either. Both conditions must be true. Quorum queues, by contrast, are always durable and persist every message.
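The “both conditions” rule can be modeled as a restart filter. This is a toy model of classic-queue behavior; the `Queue` class and `restart` function are hypothetical. The constant 2 is the AMQP 0-9-1 `delivery_mode` value that marks a message as persistent.

```python
from dataclasses import dataclass, field

PERSISTENT = 2  # AMQP 0-9-1 delivery_mode for persistent messages
TRANSIENT = 1


@dataclass
class Queue:
    name: str
    durable: bool
    messages: list = field(default_factory=list)  # (body, delivery_mode) pairs


def restart(queues):
    """Toy classic-queue broker restart: return what is left afterwards."""
    survivors = []
    for q in queues:
        if not q.durable:
            continue  # a transient queue disappears along with all its messages
        kept = [m for m in q.messages if m[1] == PERSISTENT]
        survivors.append(Queue(q.name, True, kept))
    return survivors


queues = [
    Queue("payments", durable=True,
          messages=[("charge-1", PERSISTENT), ("charge-2", TRANSIENT)]),
    Queue("scratch", durable=False, messages=[("charge-3", PERSISTENT)]),
]
after = restart(queues)
```

Only `charge-1` survives. It is the one message that was both persistent and sitting in a durable queue.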

The performance cost of persistence in RabbitMQ is substantial—writes to disk are orders of magnitude slower than memory operations. A system that processes 100,000 messages per second with transient messages might drop to 10,000 with persistent messages, depending on disk performance and message size.

Ordering: The Illusion of Sequence

Here’s a statement that sounds obvious: “messages are processed in order.” Here’s the reality: global ordering is prohibitively expensive in distributed systems, and even local ordering has surprising edge cases.

Kafka guarantees ordering within a single partition. If a producer sends messages A, B, C to partition 0, any consumer reading that partition will see A, B, C in that sequence. One edge case is producer retries, which can reorder batches unless the producer is idempotent or limits max.in.flight.requests.per.connection to 1. The guarantee also has a price: a partition can only be written by one broker (the leader) and consumed by one consumer within a consumer group at a time.

When you need to scale beyond a single partition, ordering becomes partition-local. Messages in partition 0 have an order. Messages in partition 1 have an order. But there’s no defined ordering between partition 0 and partition 1. Suppose you need ordering for a related stream of events, say all events for user 12345. The standard approach is to use the user ID as the message key. The default partitioner hashes the key, so all events for that user land in the same partition, as long as the partition count doesn’t change.
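Key-based partitioning comes down to “same key, same hash, same partition.” The sketch below assumes a CRC32 hash from the Python standard library as a stand-in. Kafka’s Java client actually uses murmur2, so real partition numbers will differ, but the ordering property is the same. The keys and event names are hypothetical.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    # Stand-in hash (assumption): Kafka's Java client uses murmur2, not CRC32.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


events = [("user-12345", "login"), ("user-777", "login"),
          ("user-12345", "add_to_cart"), ("user-12345", "checkout")]

# Append each event to its partition's list, preserving send order per partition.
partitions = {}
for key, event in events:
    partitions.setdefault(partition_for(key, 6), []).append((key, event))

p = partition_for("user-12345", 6)
user_events = [e for k, e in partitions[p] if k == "user-12345"]
```

This also shows why adding partitions to an existing topic breaks per-key ordering. `partition_for(key, 6)` and `partition_for(key, 8)` need not agree, so a key’s new events can land in a different partition from its old ones.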

RabbitMQ offers FIFO ordering within a single queue. Once several consumers compete for that queue, however, the order in which messages are processed is no longer guaranteed. The exchange routing layer that sits between producers and queues is another place where ordering can break down. Consider a topic exchange routing messages to multiple queues based on routing keys. A producer sends messages A (routing key: “user.1”), B (routing key: “user.2”), and C (routing key: “user.1”). Messages A and C go to the queue bound to “user.1”, and message B goes to the queue bound to “user.2”. Each queue preserves the order of its own messages. Nothing, however, records that B was published between A and C. If you need global order across multiple queues, the broker cannot give it to you.
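The routing in this example can be simulated with AMQP 0-9-1 topic matching. Routing keys are dot-separated words, `*` matches exactly one word, and `#` matches zero or more words. The matcher below is a sketch of those rules, not RabbitMQ’s implementation. The queue names are hypothetical. Note that a queue bound to “user.*” receives all three messages, because `*` matches any single word.

```python
def topic_matches(pattern: str, routing_key: str) -> bool:
    """AMQP 0-9-1 topic matching: '*' = exactly one word, '#' = zero or more."""
    p_words = pattern.split(".")
    k_words = routing_key.split(".")

    def match(i: int, j: int) -> bool:
        if i == len(p_words):
            return j == len(k_words)
        if p_words[i] == "#":
            # '#' may swallow zero words, or one word and keep going.
            return match(i + 1, j) or (j < len(k_words) and match(i, j + 1))
        if j == len(k_words):
            return False
        if p_words[i] == "*" or p_words[i] == k_words[j]:
            return match(i + 1, j + 1)
        return False

    return match(0, 0)


bindings = {"q.user1": "user.1", "q.user2": "user.2", "q.all_users": "user.*"}
published = [("A", "user.1"), ("B", "user.2"), ("C", "user.1")]

queues = {name: [] for name in bindings}
for msg, key in published:
    for queue, pattern in bindings.items():
        if topic_matches(pattern, key):
            queues[queue].append(msg)  # each matching queue gets its own copy
```

Each queue’s contents are internally ordered. A consumer of `q.user1` alone sees A and C, however, and has no way to learn that B happened in between.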

The fundamental constraint is this: ordering requires serialization. To guarantee that operation A happens before operation B, you must serialize them through a single processing path. Parallelism and ordering are fundamentally in tension.

Delivery Semantics: Choose Your Poison

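Message delivery semantics come in three levels: at-most-once, at-least-once, and exactly-once. They are alternatives, not properties you can combine. Each one trades the risk of losing messages against the risk of duplicating them differently.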

At-most-once delivery means a message is delivered zero or one times. The producer sends a message and immediately considers it sent, without waiting for acknowledgment. If the broker crashes before persisting the message, or if the network drops it, the message is lost forever. The advantage: maximum throughput, minimum latency. The disadvantage: data loss is possible.

At-least-once delivery means a message is delivered one or more times. The producer sends a message, waits for acknowledgment, and retries if acknowledgment doesn’t arrive. If the broker receives and persists the message, but the acknowledgment is lost before reaching the producer, the producer will retry, causing a duplicate. The advantage: no data loss. The disadvantage: duplicates are possible.
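The lost-acknowledgment case takes only a few lines to simulate. In this sketch, the `Broker` class and the `ack_lost` callback are hypothetical. The model covers only the case where the broker stores the message and the acknowledgment goes missing on the way back.

```python
class Broker:
    """Hypothetical broker that always stores what it receives."""

    def __init__(self):
        self.log = []

    def receive(self, msg):
        self.log.append(msg)  # persisted before the ack is sent


def send_at_least_once(broker, msg, ack_lost, max_attempts=5):
    """Retry until an ack arrives; ack_lost(attempt) simulates the network."""
    for attempt in range(max_attempts):
        broker.receive(msg)
        if not ack_lost(attempt):
            return attempt + 1  # number of attempts it took
    raise RuntimeError("gave up without an ack")


broker = Broker()
# The first ack is lost in transit; the second gets through.
attempts = send_at_least_once(broker, "charge order-42", ack_lost=lambda a: a == 0)
```

The producer did nothing wrong, yet the broker now holds two copies of `charge order-42`. Every at-least-once pipeline inherits this duplicate.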

Exactly-once delivery means a message is delivered exactly one time. This is the holy grail—and it’s also the most misunderstood.

Exactly-once delivery is impossible in the general case, a consequence of the Two Generals problem. Over an unreliable network, a sender cannot distinguish between “the receiver crashed before processing” and “the receiver processed the message but the acknowledgment was lost,” so it cannot know whether to retry.

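What Kafka actually provides is exactly-once processing semantics within its own boundaries. This works through a combination of idempotent producers and transactions. When you enable idempotence (enable.idempotence=true, the default since Kafka 3.0), Kafka assigns each producer a unique Producer ID (PID) and tracks sequence numbers for each partition. If a producer retries a batch, the broker recognizes the PID and sequence number combination and discards the duplicate. Transactions build on this. A producer configured with a transactional.id can write to several partitions and commit its consumer offsets in one atomic transaction. Consumers configured with isolation.level=read_committed only see records from committed transactions.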
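The broker-side deduplication can be sketched for a single partition. The model is simplified, and the `PartitionLeader` class is hypothetical. A real broker tracks sequence numbers per batch and keeps a small window of recent batches rather than a single number. It signals out-of-order sequences with an error, where this sketch returns a string instead.

```python
class PartitionLeader:
    """Simplified dedup for one partition: last sequence number per producer ID."""

    def __init__(self):
        self.records = []
        self.last_seq = {}  # producer_id -> last sequence number appended

    def append(self, producer_id, seq, value):
        last = self.last_seq.get(producer_id, -1)
        if seq <= last:
            return "duplicate"     # retry of something already written: drop it
        if seq != last + 1:
            return "out_of_order"  # a gap; a real broker answers with an error
        self.records.append(value)
        self.last_seq[producer_id] = seq
        return "appended"


leader = PartitionLeader()
results = [
    leader.append(producer_id=1000, seq=0, value="charge-1"),
    leader.append(producer_id=1000, seq=1, value="charge-2"),
    leader.append(producer_id=1000, seq=1, value="charge-2"),  # retry after a lost ack
]
```

The retried write is recognized and dropped, so the partition holds each charge once. This only protects the hop from producer to broker. It says nothing about what a consumer does with the record afterwards.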

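The performance cost of exactly-once processing in Kafka is surprisingly modest. In Confluent’s published benchmark (1 KB messages, transactions lasting 100 ms), producer throughput dropped by about 3% compared with at-least-once, in-order delivery. But the commit interval affects latency. Read_committed consumers see a transaction’s records only after it commits. Shorter commit intervals therefore mean lower end-to-end latency, but smaller transactions and more commit overhead.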

The Consumer Group Rebalancing Problem

Kafka’s consumer groups enable parallel processing: each consumer in a group handles a subset of partitions. But when consumers join or leave the group, Kafka must rebalance partition assignments. Under the classic eager rebalance protocol, the entire group stops processing while this happens.

The eager rebalancing process works like this. When a consumer joins or leaves, the group coordinator (a broker) triggers a rebalance. All consumers revoke their current partition assignments, join a rebalance session, and receive new assignments. This process takes time, often hundreds of milliseconds to seconds, and during that time no messages are processed.

For consumer groups processing high-volume streams, frequent rebalances can significantly impact throughput. The solution is to minimize both the number of rebalances and their cost:

- Use static membership: set group.instance.id so a restarted consumer rejoins under the same identity.

- Increase session timeouts (session.timeout.ms), so consumers can be unreachable longer before the coordinator evicts them.

- Use the cooperative sticky assignor (CooperativeStickyAssignor). It rebalances incrementally: only partitions that change owner are revoked, and the rest of the group keeps processing.
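Assignment strategy matters because it determines how many partitions change owner when a consumer joins. The sketch below compares a from-scratch round-robin assignment with a simplified sticky strategy, using a hypothetical group of 12 partitions and 3 consumers that grows to 4. The sticky strategy keeps existing assignments and moves only what balance requires. Both functions are illustrative assumptions, not Kafka’s actual assignor code.

```python
def round_robin(partitions, consumers):
    """Eager-style: compute a fresh assignment from scratch."""
    assignment = {c: [] for c in consumers}
    for i, p in enumerate(sorted(partitions)):
        assignment[consumers[i % len(consumers)]].append(p)
    return assignment


def sticky(previous, consumers):
    """Keep what each surviving consumer had; move only enough to rebalance."""
    assignment = {c: list(previous.get(c, [])) for c in consumers}
    # Partitions whose owner left the group must be reassigned.
    orphaned = [p for c, ps in previous.items() if c not in assignment for p in ps]
    for p in orphaned:
        least = min(consumers, key=lambda c: len(assignment[c]))
        assignment[least].append(p)
    # Move single partitions from the most- to the least-loaded consumer.
    while True:
        most = max(consumers, key=lambda c: len(assignment[c]))
        least = min(consumers, key=lambda c: len(assignment[c]))
        if len(assignment[most]) - len(assignment[least]) <= 1:
            return assignment
        assignment[least].append(assignment[most].pop())


def moved(before, after):
    """Count partitions whose owner changed."""
    owner_before = {p: c for c, ps in before.items() for p in ps}
    return sum(1 for c, ps in after.items() for p in ps if owner_before.get(p) != c)


partitions = list(range(12))
before = round_robin(partitions, ["c1", "c2", "c3"])
grown = ["c1", "c2", "c3", "c4"]  # consumer c4 joins
eager_after = round_robin(partitions, grown)
sticky_after = sticky(before, grown)
```

Here the fresh round-robin reassigns 9 of 12 partitions, while the sticky strategy moves only the 3 that c4 needs. Under the eager protocol every consumer also revokes everything during the rebalance. The cooperative protocol revokes only the partitions that actually move.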

Dead Letter Queues: Where Failed Messages Go

When a message cannot be processed—due to a poison pill, a schema mismatch, or a persistent downstream failure—it needs somewhere to go. Dead letter queues (DLQs) capture these failed messages for later inspection or retry.

Both Kafka and RabbitMQ support DLQ patterns, but the implementations differ. RabbitMQ can automatically route a message to a dead letter exchange in three cases: a consumer rejects or negatively acknowledges it with requeue=false, its TTL expires, or the queue exceeds its length limit. The Kafka broker has no built-in DLQ, although Kafka Connect offers one for sink connectors. Applications must implement the pattern explicitly by publishing failed records to a dedicated topic.

The key design decisions for DLQs are:

- When to give up: After how many retries should a message go to the DLQ? Exponential backoff helps handle transient failures, but permanent failures need a cutoff.

- What metadata to preserve: Original timestamp, failure reason, retry count, stack trace. This information is crucial for debugging and potential reprocessing.

- How to handle DLQ overflow: If the DLQ itself fills up, what happens? Alerting and automatic scaling are common approaches.

- When to retry from DLQ: Manual intervention? Scheduled retries? Circuit breaker patterns?
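The first two decisions above can be sketched together: retry with exponential backoff, then park the message with its metadata once the retry budget runs out. A Python list stands in for the DLQ topic or dead letter exchange. The handler, message fields, and parameter values are hypothetical. The `sleep` parameter is injectable so the backoff schedule can be checked without waiting.

```python
import time
import traceback
from datetime import datetime, timezone


def process_with_dlq(message, handler, dlq, max_attempts=4, base_delay_s=0.5,
                     sleep=time.sleep):
    """Retry handler(message) with exponential backoff; park it in dlq on final failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return handler(message)
        except Exception as exc:
            if attempt == max_attempts:
                dlq.append({
                    "original": message,  # keeps the original timestamp and payload
                    "failed_at": datetime.now(timezone.utc).isoformat(),
                    "error": repr(exc),
                    "attempts": attempt,
                    "stack_trace": traceback.format_exc(),
                })
                return None
            sleep(base_delay_s * 2 ** (attempt - 1))  # 0.5 s, 1 s, 2 s, ...


def always_fails(msg):
    raise ValueError("schema mismatch")  # a hypothetical permanent failure


delays, dlq = [], []
result = process_with_dlq(
    {"id": "m-1", "timestamp": "2024-01-01T00:00:00Z", "body": "{}"},
    always_fails, dlq, sleep=delays.append)
```

A fuller version would also skip the retries for errors known to be permanent, such as a schema mismatch, and send them to the DLQ immediately.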

The financial services company mentioned earlier had a DLQ but never checked it. The duplicate charges came from messages that were retried after acknowledgment but before processing completed—a gap in their exactly-once implementation.
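The defense the company eventually adopted, idempotent consumers keyed by unique transaction IDs, can be sketched with an in-memory SQLite database. The table layout and function names are hypothetical. The key design choice is recording the transaction ID and applying the side effect in the same database transaction, so a redelivered message hits the primary-key constraint instead of charging twice.

```python
import sqlite3


def make_store():
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE processed (txn_id TEXT PRIMARY KEY)")
    db.execute("CREATE TABLE charges (txn_id TEXT, amount_cents INTEGER)")
    return db


def handle_payment(db, txn_id, amount_cents):
    """Apply a charge at most once per txn_id, even if the message is redelivered."""
    try:
        with db:  # commits both inserts together, or rolls both back
            db.execute("INSERT INTO processed (txn_id) VALUES (?)", (txn_id,))
            db.execute("INSERT INTO charges VALUES (?, ?)", (txn_id, amount_cents))
        return "charged"
    except sqlite3.IntegrityError:
        return "duplicate ignored"  # txn_id already recorded: seen this one before


db = make_store()
outcomes = [handle_payment(db, "txn-42", 1999),
            handle_payment(db, "txn-42", 1999)]  # redelivery of the same message
charge_count = db.execute("SELECT COUNT(*) FROM charges").fetchone()[0]
```

This works when the side effect lives in the same database. If the charge is a call to an external payment API, that call cannot join the local transaction. The transaction ID then typically has to be passed to the API as an idempotency key.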

The Performance Reality

Benchmarks comparing Kafka and RabbitMQ often show dramatic differences: Kafka reaching millions of messages per second, RabbitMQ in the tens of thousands. But these numbers require context.

Kafka achieves high throughput through batching and sequential I/O. A single Kafka message isn’t particularly fast, because the producer, the broker, and the replication protocol all add overhead. But when you batch thousands of messages together and write them sequentially to disk, throughput soars. The trade-off is latency. Producers that wait to fill batches (controlled by linger.ms) add delay to each message, so per-message latency can rise to tens or even hundreds of milliseconds depending on batching settings, even while aggregate throughput is enormous.
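A toy cost model shows why batching dominates. Assume every request pays a fixed overhead plus a small per-message cost. The two constants below are hypothetical values chosen only for illustration, not measured Kafka numbers.

```python
def request_time_ms(batch_size, overhead_ms, per_message_ms):
    """Toy cost model: each request pays a fixed overhead plus a per-message cost."""
    return overhead_ms + batch_size * per_message_ms


def throughput_msgs_per_s(batch_size, overhead_ms, per_message_ms):
    return batch_size / (request_time_ms(batch_size, overhead_ms, per_message_ms) / 1000)


# Hypothetical costs for illustration only.
OVERHEAD_MS = 2.0       # fixed cost per request (round trip, replication, ...)
PER_MESSAGE_MS = 0.01   # marginal cost of one more message in the same request

for batch in (1, 100, 1000):
    print(batch,
          round(throughput_msgs_per_s(batch, OVERHEAD_MS, PER_MESSAGE_MS)),
          request_time_ms(batch, OVERHEAD_MS, PER_MESSAGE_MS))
```

The same model shows the cost. Each 1,000-message request takes about 12 ms instead of about 2 ms, before counting any time spent waiting in linger.ms for the batch to fill.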

RabbitMQ excels at low-latency message delivery for smaller batch sizes. Individual messages can be routed and delivered in milliseconds. But as throughput increases, latency degrades. The sweet spot depends on your workload: RabbitMQ for real-time, low-volume messaging; Kafka for high-throughput, high-volume streaming.

Compression affects this trade-off. Kafka supports gzip, snappy, lz4, and zstd compression. For text-heavy payloads like JSON or logs, compression ratios of 5x-10x are common. This multiplies effective throughput and reduces storage costs. The trade-off is CPU usage on producers and consumers. For I/O-bound workloads, the CPU cost is usually worth it. For CPU-bound workloads, it might not be.
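Kafka producers compress whole record batches rather than individual messages, so batching and compression reinforce each other. The sketch below uses gzip from the Python standard library as the codec; snappy, lz4, and zstd are not in the standard library. The JSON events are hypothetical. It compares the ratio for one message with the ratio for a batch of similar messages.

```python
import gzip
import json


def compression_ratio(payload: bytes) -> float:
    return len(payload) / len(gzip.compress(payload))


# Hypothetical log events with repetitive field names and values.
events = [
    {"ts": 1700000000 + i, "level": "INFO", "service": "payments",
     "msg": "charge accepted", "order_id": f"order-{i}"}
    for i in range(500)
]
batch = "\n".join(json.dumps(e) for e in events).encode("utf-8")
single = json.dumps(events[0]).encode("utf-8")

batch_ratio = compression_ratio(batch)    # repetition across records compresses well
single_ratio = compression_ratio(single)  # little repetition, plus gzip header overhead
print(f"single: {single_ratio:.2f}x  batch: {batch_ratio:.2f}x")
```

Real ratios depend heavily on the payload. The direction of the effect, however, is why larger batches usually compress better.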

The Missing Piece: Observability

Message queues are invisible infrastructure. When they work, nobody notices. When they fail, everything breaks. This invisibility makes observability critical.

The metrics that matter:

- Producer metrics: Record send rate, record error rate, request latency, compression ratio, batch size

- Broker metrics: Messages in/out per second, bytes in/out per second, partition count, under-replicated partitions, disk usage

- Consumer metrics: Consumer lag, consumer latency, commit rate, rebalance frequency

Consumer lag is particularly critical. For each partition, it is the gap between the newest offset in the log and the consumer group’s committed offset. A total lag of 1 million messages therefore means 1 million messages are waiting to be processed. If that lag is growing, the consumer is falling further behind. If it’s stable, the consumer is keeping pace but not catching up. If it’s shrinking, the consumer is recovering.

But lag alone isn’t enough. A consumer processing 1 million messages per second with a lag of 1 million is about one second behind. A consumer processing 10 messages per second with the same lag is about 100,000 seconds behind, more than a day. You need lag expressed as time (lag divided by consumption rate) and its trend, not just its magnitude in messages.
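The conversions above are simple enough to write down. This is a minimal sketch with hypothetical function names. “Time behind” is approximated as the time needed to drain the current backlog, and catch-up time accounts for producers that keep writing.

```python
def total_lag(end_offsets, committed_offsets):
    """Sum over partitions of (newest offset - committed offset)."""
    return sum(end_offsets[p] - committed_offsets[p] for p in end_offsets)


def lag_seconds(lag_messages, consume_rate_per_s):
    """Approximate time behind: how long draining the backlog would take."""
    return lag_messages / consume_rate_per_s


def time_to_catch_up_s(lag_messages, consume_rate_per_s, produce_rate_per_s):
    """None if the consumer is not faster than the producers (lag never shrinks)."""
    net = consume_rate_per_s - produce_rate_per_s
    return None if net <= 0 else lag_messages / net


fast = lag_seconds(1_000_000, 1_000_000)  # the fast consumer from the text
slow = lag_seconds(1_000_000, 10)         # the slow consumer from the text
recovery = time_to_catch_up_s(1_000_000, 12_000, 10_000)
```

The catch-up figure is the one to alert on. A consumer only 2,000 messages per second faster than its producers needs 500 seconds to clear a million-message backlog, and one that isn’t faster never clears it.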

What to Actually Do

Message queues are not commodities. The choice between Kafka and RabbitMQ isn’t about which one is “better”—it’s about which one matches your problem.

Choose Kafka when you need:

- High throughput event streaming

- Message replay capability

- Long-term message retention

- Event sourcing patterns

- Stream processing integration

Choose RabbitMQ when you need:

- Complex routing logic (topic exchanges, fanout, headers)

- Lower latency for individual messages

- Simpler operational model

- Traditional work queue patterns

- Request-reply messaging patterns

Neither system is a silver bullet. Both require understanding their guarantees, their failure modes, and their operational characteristics. The engineers who build reliable systems are those who look past the simple “put message in, get message out” abstraction to understand what’s actually happening underneath.

The financial services company eventually fixed their double-charging problem. They implemented idempotent consumers using unique transaction IDs, switched to exactly-once processing for their Kafka streams, and added comprehensive monitoring. But the cost was significant—not just in engineering time, but in customer trust. The queue had been “working” the whole time. They just hadn’t understood what “working” meant.

References

- Kreps, J., Narkhede, N., Rao, J. (2011). “Kafka: A Distributed Messaging System for Log Processing.” NetDB.

- Confluent. “Exactly-Once Semantics Are Possible: Here’s How Kafka Does It.” https://www.confluent.io/blog/exactly-once-semantics-are-possible-heres-how-apache-kafka-does-it/

- RabbitMQ Documentation. “AMQP 0-9-1 Model Explained.” https://www.rabbitmq.com/tutorials/amqp-concepts

- Strimzi. “Deep Dive into Apache Kafka Storage Internals.” https://strimzi.io/blog/2021/12/17/kafka-segment-retention/

- Confluent. “Benchmarking Apache Kafka, Pulsar, and RabbitMQ: Latency, Throughput, and Hardware.” https://www.confluent.io/blog/kafka-fastest-messaging-system/

- Apache Kafka Documentation. “Log Implementation.” https://kafka.apache.org/documentation/#impl_log

- Denning, P. J. (1968). “The Working Set Model for Program Behavior.” Communications of the ACM.

- Kleppmann, M. (2017). “Designing Data-Intensive Applications.” O’Reilly Media.

- Vanlightly, J. (2017). “RabbitMQ vs Kafka Part 4: Message Delivery Semantics and Guarantees.” https://jack-vanlightly.com/blog/2017/12/15/rabbitmq-vs-kafka-part-4-message-delivery-semantics-and-guarantees

- Confluent. “Apache Kafka Message Compression.” https://www.confluent.io/blog/apache-kafka-message-compression/