In 2006, Amazon discovered something that would reshape how the industry thinks about performance: every 100 milliseconds of latency cost them 1% in sales. That same year, Google found that adding just 500 milliseconds of delay to search results caused a 20% drop in traffic. These weren’t hypothetical concerns—they were measured impacts on real revenue.

The physics of the internet imposes hard constraints. Light travels through fiber optic cable at roughly two-thirds its speed in vacuum—approximately 200,000 kilometers per second. A round trip from New York to Singapore covers about 30,000 kilometers of fiber, which means a theoretical minimum latency of 150 milliseconds just for light to make the journey. Add network equipment, routing hops, and protocol overhead, and real-world latency easily exceeds 200 milliseconds.
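The 150-millisecond floor is plain arithmetic. The sketch below reproduces it under the paragraph's assumptions: light in fiber at about 200,000 km/s and roughly 15,000 km of fiber each way. The function name is illustrative. Real fiber routes are longer than great-circle distances, so treat the result as a lower bound.

```python
# Assumed propagation speed of light in fiber: ~2/3 of c.
FIBER_SPEED_KM_PER_S = 200_000


def min_rtt_ms(one_way_fiber_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone.

    Ignores routing hops, queuing, and protocol overhead, all of which
    push real-world latency higher.
    """
    round_trip_km = 2 * one_way_fiber_km
    # Multiply before dividing so round numbers stay exact.
    return round_trip_km * 1000 / FIBER_SPEED_KM_PER_S


# New York <-> Singapore, assuming ~15,000 km of fiber in each direction.
print(min_rtt_ms(15_000))  # 150.0
```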

This is the fundamental problem that Content Delivery Networks (CDNs) exist to solve: you cannot make light travel faster, but you can shorten the distance it needs to travel.

The Edge Paradox: Why Proximity Trumps Bandwidth

A common misconception about CDNs is that they primarily optimize bandwidth. In reality, bandwidth is rarely the bottleneck for typical web content. A 2MB image downloads in 16 milliseconds over a gigabit connection—the problem is that establishing the connection and requesting the image might take 200 milliseconds or more.

The TCP slow-start algorithm illustrates this vividly. When a client first connects to a server, TCP starts with a small congestion window (typically 10 segments, about 14 KB, in modern implementations) and grows it by one segment for every acknowledged segment, which roughly doubles the window each round trip. For a server across the world with a 200ms round-trip time, delivering a few hundred kilobytes can take three or four round trips, or 600-800 milliseconds, after the connection is established. During that time, the connection is throttled not by bandwidth limitations but by distance.
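A rough model shows why distance rather than bandwidth dominates. The sketch below counts round trips in idealized slow start. It assumes no packet loss, no receive-window limit, a 1,460-byte segment, and an initial window of 10 segments (the value standardized in RFC 6928). The ~200 KB response size is a hypothetical example.

```python
MSS_BYTES = 1460   # assumed maximum segment size
INITIAL_CWND = 10  # initial congestion window in segments (RFC 6928)


def slow_start_rounds(response_bytes: int,
                      init_cwnd: int = INITIAL_CWND,
                      mss: int = MSS_BYTES) -> int:
    """Round trips needed to deliver a response in idealized slow start.

    Each round trip sends a full window, and the window doubles for
    the next round (one extra segment per acknowledged segment).
    """
    cwnd, sent, rounds = init_cwnd, 0, 0
    while sent < response_bytes:
        sent += cwnd * mss
        cwnd *= 2
        rounds += 1
    return rounds


rounds = slow_start_rounds(200_000)  # a ~200 KB page
for rtt_ms in (200, 10):              # distant origin vs. nearby edge
    print(f"RTT {rtt_ms:>3} ms: {rounds} round trips = {rounds * rtt_ms} ms")
```

The round-trip count is the same in both cases. Only the cost of each round trip changes, which is exactly the lever a nearby edge pulls.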

CDNs solve this by terminating connections at edge locations close to users. Instead of crossing continents, a user’s request travels perhaps 20 kilometers to a nearby point of presence (PoP). The TCP handshake completes in under 5 milliseconds. The slow-start ramp-up finishes in tens of milliseconds. Content that would have taken 800 milliseconds or more to arrive from a distant server now starts streaming almost immediately.

Image source: Cloudflare Developers

Anycast: One IP Address, Hundreds of Destinations

The routing magic that enables this proximity is called Anycast. Unlike traditional Unicast routing, where each server has a unique IP address, Anycast allows multiple servers at different geographic locations to share the same IP address.

When a user’s browser resolves a CDN hostname, it receives an IP address. But this single IP address doesn’t point to one location—it’s simultaneously announced by hundreds of data centers around the world. The Border Gateway Protocol (BGP), which governs how traffic flows between networks, automatically routes the user’s packets to the “nearest” location based on routing metrics rather than geographic distance.

Consider what happens during a request to Cloudflare’s network. A user in São Paulo connects to Cloudflare’s 1.1.1.1 DNS resolver. The same IP address is announced by Cloudflare data centers in São Paulo, Rio de Janeiro, Buenos Aires, Miami, and dozens of other locations. The routers at the user’s ISP compare the BGP routes they have learned for that address and send the traffic to the São Paulo PoP, not because it’s geographically closest, but because its route is preferred from the user’s ISP (for example, because it has the shortest AS path).
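To make "best routing metrics" concrete, here is a deliberately simplified sketch of BGP route selection for one anycast prefix. It applies only the first two steps of the real decision process: highest LOCAL_PREF, then shortest AS path. It skips the remaining tie-breakers (origin type, MED, eBGP over iBGP, IGP cost, router ID). The routes are hypothetical. AS13335 is Cloudflare's autonomous system, and the other AS numbers come from the documentation range reserved by RFC 5398.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Route:
    pop: str             # data center that originated this announcement
    as_path: List[int]   # autonomous systems the announcement traversed
    local_pref: int = 100  # operator policy; higher is preferred


def best_route(routes: List[Route]) -> Route:
    """Pick a route using only LOCAL_PREF, then AS-path length.

    A simplification: real BGP continues through several more
    tie-breakers before choosing.
    """
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path)))


# Hypothetical view from a router at a São Paulo ISP.
routes = [
    Route("Miami", [64500, 64501, 13335]),
    Route("São Paulo", [13335]),
    Route("Buenos Aires", [64502, 13335]),
]
print(best_route(routes).pop)  # São Paulo
```

Because LOCAL_PREF is compared first, an ISP's own policy can override path length. This is one reason anycast "nearest" means nearest in routing terms, not on a map.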

This architecture has profound implications beyond performance. During a DDoS attack generating traffic from thousands of compromised machines, the attack traffic automatically disperses across many PoPs, because each bot’s packets are routed to the PoP nearest to it. Each location absorbs a fraction of the attack, so the target is defended by the network’s aggregate capacity rather than that of a single data center. To overwhelm the service, an attacker would need to saturate the PoPs serving its bots, and the largest CDNs provision aggregate capacity far beyond the largest attacks publicly reported.

The Cache Hierarchy: Not All Misses Are Equal

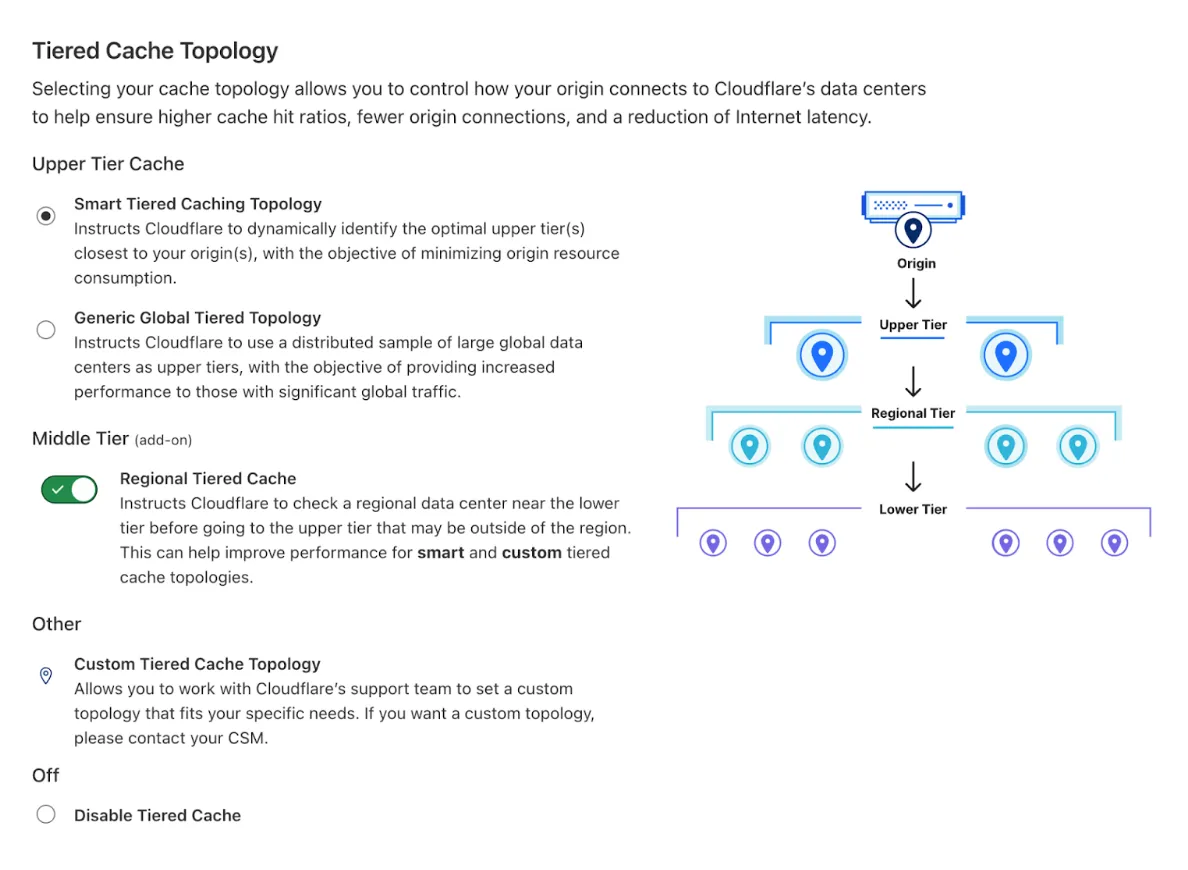

Caching is the most visible CDN feature, but the implementation is more sophisticated than a simple key-value store at the edge. Modern CDNs implement hierarchical caching with multiple tiers, each serving a specific purpose.

The first tier consists of edge servers—the PoPs closest to users. These handle the vast majority of requests and cache the most frequently accessed content. But edge servers can’t cache everything; with petabytes of content across the internet, they must be selective about what they store.

When an edge server doesn’t have requested content (a cache “miss”), it doesn’t immediately contact the origin server. Instead, it queries an upper-tier cache—a mid-layer server positioned strategically between edges and origins. This mid-tier caches content that was requested by multiple edges, acting as a regional aggregation point. Only if the mid-tier also lacks the content does the request propagate to the origin.

Cloudflare’s Tiered Cache implementation demonstrates this in practice. With their network of 330 cities, an edge server in a small market might have limited cache capacity. When it misses, it queries a regional upper-tier, perhaps in a major hub like Frankfurt or Singapore. That upper-tier, serving dozens of smaller edges, maintains a much larger cache. If one user in Vienna and another in Munich request the same object, the first request misses at the Frankfurt upper-tier and is fetched from the origin, but the second is served from Frankfurt’s cache, so the origin is contacted once instead of twice.
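The lookup path through such a hierarchy can be sketched in a few lines. In this minimal model, each tier checks its own store, asks its parent on a miss, and caches the answer on the way back. All class names, tier names, and the object path are hypothetical. The caches are unbounded and have no TTL, unlike any real CDN.

```python
class Origin:
    """Stand-in for the origin server; counts how often it is contacted."""

    def __init__(self, content):
        self.content = content
        self.fetches = 0

    def get(self, key):
        self.fetches += 1
        return self.content[key]


class CacheTier:
    """One caching layer (edge or upper tier) that asks its parent on a miss."""

    def __init__(self, name, parent):
        self.name = name
        self.parent = parent  # another CacheTier, or the Origin
        self.store = {}       # unbounded for simplicity: no eviction, no TTL

    def get(self, key):
        if key in self.store:         # hit at this tier
            return self.store[key]
        value = self.parent.get(key)  # miss: go one level up
        self.store[key] = value       # cache on the way back down
        return value


origin = Origin({"/logo.png": b"png-bytes"})
frankfurt = CacheTier("frankfurt-upper-tier", parent=origin)
vienna = CacheTier("vienna-edge", parent=frankfurt)
munich = CacheTier("munich-edge", parent=frankfurt)

vienna.get("/logo.png")  # misses at Vienna and Frankfurt, fetched from origin
munich.get("/logo.png")  # misses at Munich, hits at Frankfurt
print(origin.fetches)    # 1
```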

The mathematics of cache hierarchies reveal their value. Consider a CDN with 200 edge PoPs and an origin server. Without tiered caching, a cacheable object not present at any edge could trigger up to 200 separate origin requests—one from each edge that receives a request for it. With a mid-tier layer of 20 regional caches, each serving 10 edges, the same scenario generates at most 20 origin requests. The mid-tier absorbs 90% of the origin fetches that would otherwise occur.
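The worst-case arithmetic in the paragraph above can be written out directly. This sketch assumes one object that is cold everywhere and is requested once at every edge. It also assumes edges are split evenly across mid-tier caches and that no request coalescing happens. The function name is illustrative.

```python
import math
from typing import Optional


def worst_case_origin_fetches(edges: int,
                              edges_per_mid_tier: Optional[int] = None) -> int:
    """Origin fetches for one cold object requested once at every edge."""
    if edges_per_mid_tier is None:
        return edges  # flat topology: every edge misses straight to origin
    # Tiered topology: only the first miss at each mid-tier cache reaches origin.
    return math.ceil(edges / edges_per_mid_tier)


flat = worst_case_origin_fetches(200)
tiered = worst_case_origin_fetches(200, edges_per_mid_tier=10)
print(flat, tiered, f"{1 - tiered / flat:.0%} fewer origin fetches")
```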

Beyond Static Content: Dynamic Content Acceleration

CDNs excel at caching static assets—images, CSS, JavaScript—but modern web applications increasingly serve dynamic, personalized content that can’t be cached. This doesn’t mean CDNs provide no value for such content.

TCP and TLS connection establishment accounts for significant latency, particularly for short-lived connections. A full TLS 1.3 handshake requires one round trip (TLS 1.2 needs two), and the TCP handshake adds another. For a user with a 200-millisecond round-trip time to the origin, establishing a secure connection costs at least 400 milliseconds before any application data is sent.
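A small table of round-trip counts makes the comparison explicit. The sketch below uses a simplified model that ignores DNS lookups, TCP Fast Open, and packet loss. It counts only the round trips spent before the first HTTP request can be sent, including the session-resumption cases. The dictionary keys are descriptive labels, not protocol identifiers.

```python
# Round trips spent on connection setup before the first HTTP request can
# be sent. Simplified: ignores DNS, TCP Fast Open, and packet loss.
SETUP_ROUND_TRIPS = {
    "TCP + TLS 1.2": 1 + 2,
    "TCP + TLS 1.3": 1 + 1,
    "TCP + TLS 1.3 resumed with 0-RTT": 1 + 0,
    "QUIC (TLS 1.3 built in)": 1,
    "QUIC resumed with 0-RTT": 0,
}


def setup_ms(stack: str, rtt_ms: float) -> float:
    """Connection-setup latency for a protocol stack at a given round-trip time."""
    return SETUP_ROUND_TRIPS[stack] * rtt_ms


for stack in SETUP_ROUND_TRIPS:
    print(f"{stack:34} origin at 200 ms: {setup_ms(stack, 200):4.0f} ms   "
          f"edge at 10 ms: {setup_ms(stack, 10):3.0f} ms")
```

The same number of round trips costs 20 times less at the edge. The edge then needs no handshake at all toward the origin if it can reuse a pooled connection.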

CDNs optimize this through persistent connection pooling. Edge servers maintain long-lived connections to origins, handling thousands of requests over a single TCP connection. When a user connects to the edge—with perhaps 10ms latency—their TLS handshake completes almost instantly. The edge then reuses an existing connection to the origin, eliminating repeated handshake overhead.
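The effect of pooling is easy to see with a counter. The sketch below is a hypothetical edge-side pool that keeps idle connections per origin and reuses them. Connections are plain stand-in dictionaries rather than sockets. A production pool would also cap pool size, expire idle connections, and health-check them.

```python
from collections import defaultdict


class OriginConnectionPool:
    """Hypothetical edge-side pool that reuses idle connections per origin."""

    def __init__(self):
        self.idle = defaultdict(list)  # origin hostname -> idle connections
        self.handshakes = 0            # new TCP + TLS setups performed

    def acquire(self, origin: str) -> dict:
        if self.idle[origin]:
            return self.idle[origin].pop()  # reuse: no handshake cost
        self.handshakes += 1                # no idle connection: pay for setup
        return {"origin": origin, "id": self.handshakes}

    def release(self, conn: dict) -> None:
        self.idle[conn["origin"]].append(conn)  # keep it warm for the next request


pool = OriginConnectionPool()
for _ in range(1000):  # 1,000 sequential user requests
    conn = pool.acquire("origin.example.com")
    # ... forward the request and read the response over conn ...
    pool.release(conn)
print(pool.handshakes)  # 1
```

With concurrent requests, the pool grows to as many connections as are simultaneously in use. After that, handshakes stop.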

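TLS session resumption further reduces overhead. When a client reconnects to an edge server it has previously visited, it can resume the session using a pre-shared key from the earlier connection instead of performing a full handshake. With TLS 1.3 0-RTT (early data), the client can send its request in the very first flight, so TLS adds no round trips before the request goes out; because early data can be replayed, servers typically accept it only for idempotent requests. For users on mobile networks with variable connectivity, where connections frequently drop and reconnect, this can cut connection setup time roughly in half over TCP, and eliminate it entirely over QUIC.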

Protocol Optimizations: HTTP/2, HTTP/3, and QUIC

HTTP/2 introduced multiplexing: the ability to send multiple requests over a single TCP connection without waiting for responses. This eliminated the application-level head-of-line blocking that plagued HTTP/1.1, where each request on a connection had to complete before the next could begin.

But HTTP/2 still runs over TCP, and TCP has its own head-of-line blocking problem. TCP delivers a single ordered byte stream, so when a packet is lost, data that arrives after it cannot be handed to the application until the retransmission arrives, even if that data belongs to a different HTTP stream. On lossy networks (mobile, public Wi-Fi), this can cause significant delays.

HTTP/3 solves this by running over QUIC, a transport protocol built on UDP rather than TCP. QUIC implements its own congestion control and reliability mechanisms, but crucially, it handles each stream independently. A lost packet delays only the streams whose data it carried; other streams continue uninterrupted.
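The difference between the two transports can be reduced to an ordering rule, and a toy count shows its effect. In the sketch below, packets are labeled by stream and one is lost. TCP-style delivery holds back everything after the gap. QUIC-style delivery holds back only later packets of the same stream. The model assumes one stream per packet and ignores congestion-control reactions to the loss.

```python
from typing import List


def blocked_packets(packets: List[str], lost_index: int,
                    per_stream_ordering: bool) -> int:
    """Count packets that arrive but must wait for the lost one's retransmission.

    packets: stream ID of each packet, in transmission order.
    per_stream_ordering=False models TCP (one ordered byte stream);
    True models QUIC (ordering enforced only within each stream).
    """
    lost_stream = packets[lost_index]
    blocked = 0
    for stream in packets[lost_index + 1:]:
        if not per_stream_ordering or stream == lost_stream:
            blocked += 1
    return blocked


# Three interleaved HTTP streams; the second packet (stream B) is lost.
packets = ["A", "B", "C", "A", "B", "C", "A", "B", "C"]
print("TCP: ", blocked_packets(packets, 1, per_stream_ordering=False))  # 7
print("QUIC:", blocked_packets(packets, 1, per_stream_ordering=True))   # 2
```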

CDNs were early adopters of HTTP/3. Cloudflare enabled QUIC support in 2019, and by 2023, over 25% of HTTP requests to their network used HTTP/3. The benefits are most pronounced for users on mobile networks, where packet loss rates are higher and the independent stream handling of QUIC provides measurable latency improvements.

The request path through the tiered cache hierarchy described earlier:

```mermaid
sequenceDiagram
    participant Client
    participant Edge
    participant UpperTier
    participant Origin

    Client->>Edge: Request for content
    alt Cache Hit at Edge
        Edge-->>Client: Serve from cache
    else Cache Miss at Edge
        Edge->>UpperTier: Check upper tier
        alt Cache Hit at Upper Tier
            UpperTier-->>Edge: Return content
            Edge-->>Client: Serve content
        else Cache Miss at Upper Tier
            UpperTier->>Origin: Fetch from origin
            Origin-->>UpperTier: Return content
            UpperTier-->>Edge: Cache and forward
            Edge-->>Client: Serve content
        end
    end
```

Compression: When Smaller Means Faster

CDNs apply compression to reduce transfer sizes, but the choice of algorithm involves trade-offs. Gzip has been the standard for decades, offering reasonable compression at low computational cost. Brotli, developed by Google and released in 2015, achieves 14-21% better compression ratios than Gzip for typical web content.

The trade-off is computational cost. Brotli at quality level 11 (maximum compression) requires significantly more CPU time than Gzip, even at Gzip’s maximum level of 9. For a CDN handling millions of requests per second, this matters. Many CDNs that compress responses on the fly use Brotli at moderate quality levels (around 4-6), where the compression advantage is still significant (roughly 15-18% over Gzip) but the CPU cost remains manageable.

The compression choice also depends on content type. Brotli excels at compressing text-based content—HTML, CSS, JavaScript—where it can leverage its built-in dictionary of common web terms. For binary content like images, the difference is negligible because images are already compressed with specialized algorithms (JPEG, PNG, WebP).
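At the edge, this choice typically becomes a per-response decision driven by the client's `Accept-Encoding` header and the response's content type. The sketch below is a hypothetical policy, not any CDN's actual logic. It prefers Brotli for text whenever the client accepts it, falls back to Gzip, and leaves already-compressed formats such as JPEG untouched. The header parser is simplified: it ignores the `*` wildcard and any parameter other than `q`.

```python
from typing import Dict

# Hypothetical list of content types worth compressing at the edge.
COMPRESSIBLE_PREFIXES = ("text/", "application/javascript",
                         "application/json", "image/svg+xml")


def parse_accept_encoding(header: str) -> Dict[str, float]:
    """Parse e.g. 'gzip, br;q=0.8' into {'gzip': 1.0, 'br': 0.8}."""
    prefs = {}
    for part in header.split(","):
        token, _, param = part.partition(";")
        token, param = token.strip().lower(), param.strip()
        if not token:
            continue
        q = 1.0
        if param.startswith("q="):
            try:
                q = float(param[2:])
            except ValueError:
                q = 0.0  # treat a malformed weight as "not acceptable"
        prefs[token] = q
    return prefs


def choose_encoding(accept_encoding: str, content_type: str) -> str:
    """Pick a response encoding under the hypothetical policy above."""
    if not content_type.startswith(COMPRESSIBLE_PREFIXES):
        return "identity"  # images, video, etc. are already compressed
    prefs = parse_accept_encoding(accept_encoding)
    for encoding in ("br", "gzip"):  # server-side preference order
        if prefs.get(encoding, 0.0) > 0:
            return encoding
    return "identity"


print(choose_encoding("gzip, deflate, br", "text/html"))  # br
print(choose_encoding("gzip", "application/javascript"))  # gzip
print(choose_encoding("gzip, br", "image/jpeg"))          # identity
```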

Cache Invalidation: The Hardest Problem in Distributed Systems

Phil Karlton famously said, “There are only two hard things in Computer Science: cache invalidation and naming things.” CDNs grapple with the first problem constantly.

The naive approach—setting a Time To Live (TTL) and waiting for content to expire—works for static assets that rarely change. But news sites, e-commerce platforms, and social media need content updates to propagate within seconds or minutes, not hours.

Purge APIs allow explicit cache invalidation. When content changes at the origin, the application calls the CDN’s purge API to remove the cached version. Modern CDNs propagate purges globally within seconds—Cloudflare claims their purge API reaches all PoPs in under 30 seconds.

But purging has costs. Every purge removes content that might have been useful to other users. A popular article updated for a typo correction triggers a global purge, and subsequent requests from users worldwide must fetch from the origin until the cache rebuilds. Smart invalidation—purging only what changed rather than entire caches—becomes crucial at scale.
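One common form of smart invalidation is tag-based purging. Commercial CDNs expose this as "cache tags" or "surrogate keys": each cached object carries labels, and a purge removes only objects with a given label. The sketch below combines that idea with TTL expiry in a hypothetical in-memory cache. It is not a real CDN API. An injectable clock keeps the example deterministic.

```python
import time


class TaggedCache:
    """Hypothetical edge cache with TTL expiry and tag-based purging."""

    def __init__(self, clock=time.monotonic):
        self.clock = clock
        self.entries = {}  # key -> (value, expires_at, tags)

    def put(self, key, value, ttl_s, tags=()):
        self.entries[key] = (value, self.clock() + ttl_s, frozenset(tags))

    def get(self, key):
        entry = self.entries.get(key)
        if entry is None:
            return None
        value, expires_at, _ = entry
        if self.clock() >= expires_at:  # TTL elapsed: treat as a miss
            del self.entries[key]
            return None
        return value

    def purge_tag(self, tag):
        """Remove only the objects carrying this tag; return how many."""
        doomed = [k for k, (_, _, tags) in self.entries.items() if tag in tags]
        for key in doomed:
            del self.entries[key]
        return len(doomed)


now = [0.0]  # fake clock for a deterministic example
cache = TaggedCache(clock=lambda: now[0])
cache.put("/article/42", "<html>v1</html>", ttl_s=3600, tags={"article-42"})
cache.put("/article/42/amp", "<html>amp v1</html>", ttl_s=3600, tags={"article-42"})
cache.put("/article/7", "<html>other</html>", ttl_s=3600, tags={"article-7"})

print(cache.purge_tag("article-42"))  # 2: both renditions of the edited article
print(cache.get("/article/7"))        # still cached
now[0] = 3600.0                       # one hour later
print(cache.get("/article/7"))        # None: TTL expired
```

The typo fix in one article evicts that article's renditions and nothing else. Unrelated pages keep serving from cache until their own TTLs run out.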

Origin Shield: Protecting the Backend

A poorly configured CDN can paradoxically increase origin load. Consider an edge network with 200 PoPs, each receiving a request for uncached content within seconds of each other. Without protection, the origin receives 200 simultaneous requests—a “thundering herd” that can overwhelm capacity.

Origin Shield addresses this by designating a single PoP as the caching layer for the origin. All other PoPs must fetch through this shield. When multiple edges request the same content at once, the shield collapses their requests into a single origin fetch and serves the rest from its cache.
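The "only one request reaches the origin" behavior depends on request coalescing: concurrent misses for the same key wait on a single in-flight fetch instead of each starting their own. The sketch below shows one way to do that with threads. The class and function names are hypothetical. If the leader's fetch fails, waiting followers simply get `None`; a real shield would retry or propagate the error.

```python
import threading
import time


class CoalescingShield:
    """Hypothetical shield that collapses concurrent misses into one origin fetch."""

    def __init__(self, fetch_from_origin):
        self.fetch_from_origin = fetch_from_origin
        self.cache = {}
        self.in_flight = {}  # key -> Event that is set when the fetch finishes
        self.lock = threading.Lock()

    def get(self, key):
        with self.lock:
            if key in self.cache:
                return self.cache[key]
            event = self.in_flight.get(key)
            leader = event is None
            if leader:  # first requester for this key performs the fetch
                event = threading.Event()
                self.in_flight[key] = event
        if leader:
            try:
                value = self.fetch_from_origin(key)
                with self.lock:
                    self.cache[key] = value
            finally:
                with self.lock:
                    del self.in_flight[key]
                event.set()  # wake every follower, even on failure
            return value
        event.wait()  # followers wait for the leader's fetch
        with self.lock:
            return self.cache.get(key)  # None if the leader's fetch failed


calls = []


def slow_origin(key):
    calls.append(key)
    time.sleep(0.05)  # simulated origin latency
    return f"content for {key}"


shield = CoalescingShield(slow_origin)
threads = [threading.Thread(target=shield.get, args=("/video/intro.mp4",))
           for _ in range(200)]  # a "thundering herd" of 200 requests
for t in threads:
    t.start()
for t in threads:
    t.join()
print(len(calls))  # 1
```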

AWS CloudFront’s Origin Shield, enabled in the optimal region, reduced origin requests by 94% for one streaming service. The cost savings were substantial—origin egress traffic dropped by a factor of 15, and the origin server could serve the same traffic with far fewer resources.

The Security Layer: Defense at the Edge

CDNs have evolved beyond acceleration into security platforms. The same edge infrastructure that brings content closer to users also provides a defensive perimeter against attacks.

Web Application Firewalls (WAFs) at the edge inspect incoming requests for attack patterns—SQL injection, cross-site scripting, path traversal—before they reach the origin. Rules can be updated globally within seconds, responding to newly discovered vulnerabilities faster than individual applications could patch.

Bot detection systems analyze traffic patterns to distinguish legitimate users from automated scripts. A CDN with visibility into billions of daily requests can identify bot behavior—request frequency, header patterns, TLS fingerprinting—and challenge or block suspicious traffic. Some advanced systems even detect headless browsers through JavaScript execution tests, challenging traffic that claims to be from a real browser but fails to execute JavaScript as expected.
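Request frequency is the simplest of these signals to illustrate. The sketch below is a toy sliding-window check that flags a client once it exceeds a request limit within a time window. The limit and window values are arbitrary assumptions, and 203.0.113.7 is a documentation address. Real bot detection combines many signals, and a rate threshold alone would both miss slow bots and flag busy shared IPs.

```python
from collections import deque


class SlidingWindowRateCheck:
    """Toy request-frequency signal: flag a client exceeding `limit`
    requests within the last `window_s` seconds."""

    def __init__(self, limit: int, window_s: float):
        self.limit = limit
        self.window_s = window_s
        self.history = {}  # client id -> deque of request timestamps

    def is_suspicious(self, client: str, now: float) -> bool:
        times = self.history.setdefault(client, deque())
        times.append(now)
        while times and times[0] <= now - self.window_s:
            times.popleft()  # forget requests that fell out of the window
        return len(times) > self.limit


check = SlidingWindowRateCheck(limit=10, window_s=1.0)
# A client issuing 50 requests within half a second trips the check.
flags = [check.is_suspicious("203.0.113.7", now=i * 0.01) for i in range(50)]
print(flags.index(True))  # 10: the 11th request inside the window
```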

Performance Metrics: Measuring What Matters

Cache hit ratio—the percentage of requests served from cache—is the most commonly cited CDN metric, but it can be misleading. A 99% hit ratio sounds impressive, but if the 1% of misses are for large video files while the hits are tiny JavaScript files, the origin still handles most of the bandwidth.

Origin offload provides a more complete picture. This metric measures the percentage of bytes served from cache versus the total bytes delivered. A 90% origin offload means the origin transmitted only 10% of the data ultimately delivered to users—the CDN handled the remaining 90%.
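Computing both metrics from the same request log shows how far apart they can be. The traffic mix below is hypothetical: 99 small cached scripts and one large uncached video, the scenario described above. The function takes `(bytes_delivered, served_from_cache)` pairs, a format assumed for this sketch.

```python
from typing import Iterable, Tuple


def cache_metrics(requests: Iterable[Tuple[int, bool]]) -> Tuple[float, float]:
    """Return (hit_ratio, origin_offload) as fractions of 1.

    hit_ratio counts requests; origin_offload counts bytes.
    """
    total_requests = hits = total_bytes = cached_bytes = 0
    for size, from_cache in requests:
        total_requests += 1
        total_bytes += size
        if from_cache:
            hits += 1
            cached_bytes += size
    return hits / total_requests, cached_bytes / total_bytes


# Hypothetical traffic: 99 cached 20 KB scripts, one uncached 500 MB video.
traffic = [(20_000, True)] * 99 + [(500_000_000, False)]
hit_ratio, offload = cache_metrics(traffic)
print(f"hit ratio {hit_ratio:.0%}, origin offload {offload:.1%}")
```

Here a 99% hit ratio coexists with an origin offload well under 1%, because nearly all the bytes came from the single miss.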

Time to First Byte (TTFB) measures how quickly the server begins sending data. For cached content, this should be under 50 milliseconds for users near an edge PoP. Higher TTFB for cached content suggests misconfiguration or routing problems.

Trade-offs and Costs

CDNs aren’t a universal solution. The architecture introduces complexity—another layer of caching to manage, another potential point of failure. Configuration mistakes can propagate globally, affecting all users simultaneously rather than just one region.

Cost structures also differ from direct origin hosting. CDNs typically charge for bandwidth and requests, which can become expensive for high-traffic sites. However, the origin bandwidth savings often offset CDN costs. A site with 95% origin offload (measured in bytes) pays the CDN for 100% of delivered bandwidth but pays origin egress for only 5% of it, a substantial saving when origin egress is priced per gigabyte.

Dynamic content presents challenges. Personalized responses can’t be cached, so each request traverses to the origin. The CDN still provides value through connection optimization and security, but the latency benefits are modest compared to static content caching.

The Evolution Continues

CDNs are evolving into edge computing platforms. Rather than just caching and serving content, modern CDNs execute code at the edge: redirecting requests, transforming responses, implementing authentication logic. Cloudflare Workers, AWS Lambda@Edge, and similar services allow developers to deploy functions, commonly written in JavaScript, to edge locations around the world.

This shifts computation closer to users. An API endpoint that performs authentication and database queries can execute at the edge, reading from a globally distributed database. The user experiences tens of milliseconds of latency instead of hundreds—authentication that once required a round-trip to a central data center now happens within the same city.

The original CDN promise remains unchanged: reduce the distance data must travel. But the implementation has grown from simple caching to a full distributed computing platform. Understanding the architecture (Anycast routing, cache hierarchies, protocol optimizations, security layers) enables better utilization of these systems. The speed of light remains constant, but the distance data has to cover keeps shrinking.

References

- Kohavi, R., & Longbotham, R. (2007). Online Experiments: Lessons Learned. IEEE Computer, 40(9), 103-105.

- Wessels, D., & Claffy, K. (1998). ICP and the Squid Web Cache. IEEE Journal on Selected Areas in Communications, 16(3), 345-357.

- Pathan, M., & Buyya, R. (2007). A Taxonomy and Survey of Content Delivery Networks. Grid Computing and Distributed Systems Laboratory, University of Melbourne.

- Nygren, E., Sitaraman, R. K., & Sun, J. (2010). The Akamai Network: A Platform for High-Performance Internet Applications. ACM SIGOPS Operating Systems Review, 44(3), 2-19.

- Cloudflare. (2025). Tiered Cache Documentation. Retrieved from https://developers.cloudflare.com/cache/how-to/tiered-cache/

- Cloudflare. (2025). Global Network Map. Retrieved from https://www.cloudflare.com/network/

- Fastly. (2024). Origin Shield: How Shielding Improves Performance. Retrieved from https://www.fastly.com/blog/let-the-edge-work-for-you-how-shielding-improves-performance

- Akamai. (2017). State of Online Retail Performance. Akamai Technologies.

- Google. (2015). QUIC: A UDP-Based Secure and Reliable Transport for HTTP/2. IETF Draft.

- Alzoubi, H. A., et al. (2018). Dissecting Latency in the Internet’s Fiber Infrastructure. arXiv preprint arXiv:1811.02900.